In this blog post we’re going to do a couple of things. First, we’ll explore object-based analytics: what they are, why they exist, and the most common types found on the market today. Then we’ll look at the Advanced Object Search capabilities of Nx Meta VPaaS and Nx Witness VMS, including how the feature works on a real system.

What are object-based video analytics?

Object-based video analytics are driven by deep-learning, AI-enabled algorithms: neural networks trained on human-annotated data sets. These algorithms were first developed to detect objects in still images. Because a video is just a stream of images, they can also detect objects in video streams: people, bags, vehicles, clothing, eyeglasses, hot dogs (or not hot dogs), and more.

Why do object-based video analytics exist?

The short answer: inference engines trained with deep learning (aka AI algorithms or computer vision applications) work!

In the past, video analytics were primarily based on changes to pixels (motion detection, for example) and were often implemented too generically to reliably detect specific events across a broad range of environments. If motion is the primary input that triggers an algorithm’s response, and motion is determined by simple value changes at the pixel level, then the algorithm is prone to false triggers from lighting changes or from scene changes caused by factors such as wind.
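To see why pixel-level motion detection is fragile, here is a toy frame-differencing detector in Python. It is a minimal sketch under simplifying assumptions (tiny synthetic grayscale frames and arbitrary, hypothetical thresholds `pixel_threshold` and `area_threshold`), not the algorithm used by any specific camera or VMS. Because it only counts changed pixels, it cannot tell an object entering the scene from a change in lighting:

```python
# Toy pixel-difference motion detector: an illustration of the general technique,
# not the algorithm used by any particular camera or VMS.
# A frame is a grayscale image stored as a list of rows of 0-255 brightness values.


def changed_fraction(prev, curr, pixel_threshold=25):
    """Fraction of pixels whose brightness changed by more than pixel_threshold."""
    total = 0
    changed = 0
    for row_prev, row_curr in zip(prev, curr):
        for a, b in zip(row_prev, row_curr):
            total += 1
            if abs(a - b) > pixel_threshold:
                changed += 1
    return changed / total if total else 0.0


def motion_detected(prev, curr, pixel_threshold=25, area_threshold=0.02):
    """Report 'motion' when more than area_threshold of the frame changed."""
    return changed_fraction(prev, curr, pixel_threshold) > area_threshold


def make_frame(width, height, brightness):
    """Build a uniform frame of the given size and brightness."""
    return [[brightness] * width for _ in range(height)]


# Case 1: a small bright object enters a static, dark scene (25 of 400 pixels change).
background = make_frame(20, 20, 50)
with_object = [row[:] for row in background]
for y in range(5, 10):
    for x in range(5, 10):
        with_object[y][x] = 200

# Case 2: nothing moves, but the whole scene brightens (e.g. a light switches on).
brighter = make_frame(20, 20, 90)

print(motion_detected(background, with_object))  # True: a real event
print(motion_detected(background, brighter))     # True: a false alarm caused by lighting alone
```

Both cases trigger the detector. An object-based analytic asks a different question ("is there a person or vehicle here?") instead of "did pixels change?", which is why it is far less sensitive to this kind of scene-wide change.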

Object-based analytics, on the other hand, are built on pre-trained neural networks (aka inference engines). They are highly accurate and work well across different lighting conditions and environments because the networks are trained to recognize objects under a wide range of conditions: angles, lighting, environmental influences, and so on.

Because they are so reliable, object-based analytics give users a simpler, more dependable way to do two key things: create rules that automate alerts and other system actions, and quickly search video archives using metadata tags (aka object attributes).
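The rule-automation idea can be sketched in a few lines. This is a minimal Python illustration, not Nx’s rules engine: the `DetectedObject` record, the `Rule` fields, and action names such as `"alert"` and `"bookmark"` are hypothetical. A rule fires when an incoming object has the right type, camera, and attribute values:

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DetectedObject:
    # Hypothetical metadata record; real analytics define their own object types and attributes.
    object_type: str
    attributes: dict
    camera: str
    timestamp_ms: int


@dataclass
class Rule:
    # "When an object of this type, with these attribute values, appears on this camera, do action."
    object_type: str
    required_attributes: dict = field(default_factory=dict)
    camera: Optional[str] = None  # None means "any camera"
    action: str = "alert"

    def matches(self, obj: DetectedObject) -> bool:
        if obj.object_type != self.object_type:
            return False
        if self.camera is not None and obj.camera != self.camera:
            return False
        # Every required attribute must be present with exactly the required value.
        return all(obj.attributes.get(k) == v for k, v in self.required_attributes.items())


def evaluate(rules, objects):
    """Return (action, object) pairs for every rule that fires on every object, in arrival order."""
    return [(rule.action, obj) for obj in objects for rule in rules if rule.matches(obj)]


rules = [
    Rule("Vehicle", {"color": "red"}, camera="Gate", action="alert"),
    Rule("Person", action="bookmark"),
]
objects = [
    DetectedObject("Vehicle", {"color": "red", "type": "truck"}, "Gate", 1000),
    DetectedObject("Vehicle", {"color": "blue"}, "Gate", 2000),
    DetectedObject("Person", {"top_color": "black"}, "Lobby", 3000),
]

fired = evaluate(rules, objects)
for action, obj in fired:
    print(action, obj.object_type, obj.camera, obj.timestamp_ms)
```

Only the red vehicle at the gate raises an alert, and the person in the lobby is bookmarked. The point of the design is that rules are written against object types and attributes rather than raw pixel changes.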

What are the most common types of object-based video analytics?

Detecting Different Types of Objects

There are a lot of object-driven video analytics out there these days. But if you step back and look at them as a whole, they were created to identify many of the same things security personnel are trained to observe: people (including their clothing), vehicles (including their unique identifying traits), text, weapons, and bags. More advanced algorithms can detect specific behaviors that involve a combination of people and vehicles.

In-Camera Analytics | Server-Based (aka On-Prem) | Cloud

These computer vision applications can also be deployed in different ways. The more complex the algorithm, the more computing power it needs. Simple algorithms, like face detection or person detection, generally don’t require much processing power.

In-Camera Object-Based Video Analytics

As cameras evolve and are built with more powerful chips, more RAM, and bigger onboard storage, many object-based analytics are now built directly into cameras. At Nx, we call these in-camera analytics. In-camera analytics have a big advantage over on-prem (server-based) analytics: they don’t require any additional hardware or software when designing a system. They are self-contained, with the camera capturing and analyzing video in one compact package, which makes them easy to deploy and use in real-world systems. In-camera object-based video analytics are typically reliable, work in real time, are secure because they remain inside secured LAN/WAN environments, and keep capturing analytics even when Internet connectivity fails.

Server-Based (On-Prem) Object-Based Video Analytics

Alongside in-camera analytics, many companies have developed object-based analytics that run on NVIDIA GPUs, larger Intel CPUs, or specialized chips such as those from Hailo. These server-based analytics are installed on the same LAN or WAN as the cameras. They typically pull RTSP streams from the cameras, analyze them, and return metadata to a VMS. In Powered by Nx products, this integration is built with Nx Meta using our Metadata SDK.

The advantages of server-based (on-prem) analytics are that they can run more advanced analytics and can be deployed on top of existing systems where high-resolution IP cameras are already installed. Like in-camera analytics, they are typically reliable, work in real time, are secure because they remain inside secured LAN/WAN environments, and keep capturing analytics even when Internet connectivity fails.

Cloud-Based Object Video Analytics

And finally, we come to cloud-based object video analytics. Cloud-based analytics offer many of the same features as in-camera and server/on-prem object-based video analytics, with one exception: they require an Internet connection to function. This is a big difference from the other deployment models.

The positives? Properly designed cloud-based analytics can scale to any number of streams, provided the Internet connection between the cameras/VMS and the cloud service has enough bandwidth to carry them.
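The bandwidth caveat comes down to simple arithmetic: the number of streams an uplink can carry is roughly its usable capacity divided by the bitrate of each stream. The Python sketch below is illustrative only. The 4 Mbps per-stream bitrate and the 80% usable-capacity `headroom` are assumptions chosen for the example, not Nx or vendor guidance:

```python
def max_cloud_streams(uplink_mbps, per_stream_mbps, headroom=0.8):
    """Streams an uplink can carry when only `headroom` (a fraction) of it is used for video.

    Assumes every stream has the same constant bitrate; real streams vary with
    resolution, codec, frame rate, and scene activity.
    """
    if per_stream_mbps <= 0:
        raise ValueError("per_stream_mbps must be positive")
    if not 0 < headroom <= 1:
        raise ValueError("headroom must be in (0, 1]")
    usable_mbps = uplink_mbps * headroom
    return int(usable_mbps // per_stream_mbps)


# Illustrative numbers only: 4 Mbps per stream is an assumption, not a measured figure.
print(max_cloud_streams(100, 4))   # 20 streams on a 100 Mbps uplink
print(max_cloud_streams(1000, 4))  # 200 streams on a 1 Gbps uplink
```

In other words, the cloud service itself may scale without limit, but each site’s uplink caps how many streams that site can send to it.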

Advanced Object Search in Nx v5

A Standardized Way to Search Video for All Object-Based Analytics

Here at Nx, we are always looking for better, more uniform ways for our users, developers, and customers to take advantage of emerging technologies like object-based video analytics. In v4 of Nx Meta / Nx Witness VMS, we created the Metadata SDK and Object-Overlays for the Nx Desktop client (available in Nx Meta and Nx Witness VMS). The Metadata SDK is a tool that lets any object-based video analytic be integrated rapidly with any Powered by Nx product. Once an analytic is integrated, the objects it detects are imported into a dedicated object database and indexed so that the associated footage can be recalled quickly. Nx also natively supports in-camera analytics from leading manufacturers like Bosch, DW, Hanwha, Vivotek, and more!

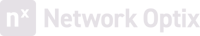

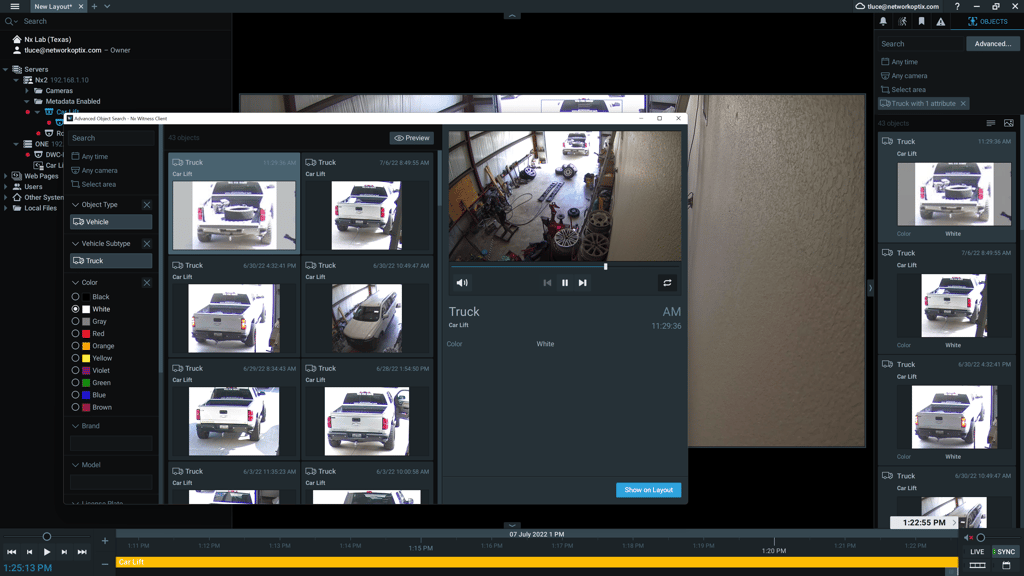

In v5, we’re extending that capability with Advanced Object Search, a new way to quickly and easily search video archives using captured object metadata. Designed as a pop-out window, the new feature lets operators do two things at once: keep an eye on live video AND run detailed object searches using the attributes in captured metadata.

This is a fundamentally different approach from most competing, traditional VMS products. Those products often have different processes and user experiences depending on which computer vision / object-based video analytics application is integrated. In any Powered by Nx product, by contrast, objects are captured, displayed, used to create system automations, and searched the same way, no matter which analytic detected them.
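One way to picture a single, uniform search across many analytics is an inverted index over normalized object records. The Python sketch below is hypothetical: it is not Nx’s object database or API, and the `ObjectTrack` fields, the `ObjectIndex` class, and the source labels are illustrative assumptions. Because records from an in-camera analytic and a server-based analytic share one schema, a single query finds both:

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectTrack:
    # Hypothetical normalized record: every integrated analytic, wherever it runs,
    # reports detected objects in this same shape.
    track_id: str
    source: str        # illustrative label, e.g. "in-camera" or "server"
    object_type: str
    attributes: tuple  # (name, value) pairs
    camera: str
    start_ms: int


class ObjectIndex:
    """Inverted index: each (field, value) pair maps to the ids of tracks that have it."""

    def __init__(self):
        self._tracks = {}
        self._postings = defaultdict(set)

    def add(self, track):
        self._tracks[track.track_id] = track
        self._postings[("object_type", track.object_type)].add(track.track_id)
        self._postings[("camera", track.camera)].add(track.track_id)
        for name, value in track.attributes:
            self._postings[(name, value)].add(track.track_id)

    def search(self, **criteria):
        """Return tracks matching every criterion, oldest first; no criteria returns all."""
        ids = set(self._tracks)
        for key, value in criteria.items():
            # .get() does not insert into the defaultdict, so unknown keys match nothing.
            ids &= self._postings.get((key, value), set())
        return sorted((self._tracks[i] for i in ids), key=lambda t: t.start_ms)


index = ObjectIndex()
index.add(ObjectTrack("t1", "in-camera", "Vehicle", (("color", "red"),), "Gate", 1000))
index.add(ObjectTrack("t2", "server", "Vehicle", (("color", "red"), ("make", "Ford")), "Parking", 500))
index.add(ObjectTrack("t3", "server", "Person", (("top_color", "red"),), "Gate", 1500))

print([t.track_id for t in index.search(object_type="Vehicle", color="red")])  # ['t2', 't1']
print([t.track_id for t in index.search(camera="Gate")])                       # ['t1', 't3']
```

The query for red vehicles returns a track from the in-camera analytic and one from the server-based analytic with no per-analytic code path, which is the property a standardized search is after.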

How Advanced Object Search Works

Watch the YouTube video embedded below to learn more about the new Advanced Object Search features in Nx v5.